Data Lab

From pricing models and ETL pipelines to small trading experiments and research notebooks.

Quantitative Finance · Data & ML

Montréal, Québec, Canada

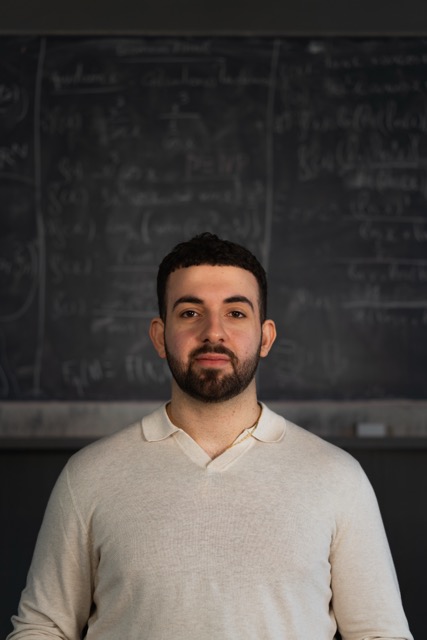

I like building tools that make data easier to understand and work with: prediction models, research notebooks, and small interfaces that make complex ideas feel intuitive.

At Desjardins Insurance, I work on ML pricing models and end-to-end data pipelines that feed real business decisions. That work has taught me the value of clean data, reproducibility, and thoughtful engineering.

I enjoy working where data, software, and ML meet, whether that’s experimenting with trading ideas, exploring new models, or building tools that help make decisions clearer.


Decision Stack

Bringing together ML, data engineering and intuitive interfaces to support better decisions.

Currently building

A snapshot of how I think about quantitative finance, data and building tools that actually get used.

I’m an undergraduate in Mathematics & Computer Science at the Université de Montréal, interested in machine learning and data-driven systems. I enjoy taking a vague idea (“we should try this”) and turning it into a real project… usually powered by an unhealthy amount of caffeine and one or two existential crises.

Outside class, I’m the Treasurer of UdeM AI and I practice Brazilian Jiu-Jitsu (blue belt) — which turns out to be a surprisingly good metaphor for decision-making under pressure. I also played rugby with the Carabins (UdeM), and I enjoy thinking games like chess and poker, mostly to learn how humans (including me) make questionable decisions with confidence.

Away from the screen, I split my time between BJJ, the gym, chess, poker and discovering Montréal cafés and restaurants with my girlfriend. I like learning things just for the sake of it, whether it’s a concept, a model, or a hobby I’ll pretend I have time for. It keeps life interesting.

What I’m actively working on and thinking about right now.

Formal training that underpins my quantitative and computational work.

B.Sc. in Mathematics & Computer Science

Diploma of College Studies (DEC) — Accounting and Management

Roles where I applied mathematical reasoning, analytics and financial intuition.

Self-initiated work that reflects how I explore ideas and build tools beyond the classroom.

PyTorch · Ray RLlib · PyG · Game Theory

Morgan Stanley CodeToGive · React · FastAPI · Django · RAG

C++ · STL · CMake · pybind11

Python · Streamlit · NumPy · SciPy · Plotly

PyTorch · PyG · Graph Neural Networks

Computer vision · NLP · Compilers · Game AI

A look at an exploratory research sandbox I’m building to study liquidity, competition and execution using multi-agent reinforcement learning.

Liquidity isn’t set by a single model; it’s the emergent result of makers, takers and arbitrageurs reacting to each other and to changing market regimes. I wanted a sandbox to study those dynamics on controllable synthetic order books before touching any production setting.

The sandbox combines:

The environment, PPO trainer, and scripts for single-/multi-agent runs are in place. Recent runs show sensible spread widening in stress regimes, but the arbitrage agent still loses money and taker completion incentives need tuning.

Next: add richer regime calibration from the bundled synthetic datasets, tighten taker completion rewards, rebalance arbitrage penalties and close bonuses, and experiment with a centralized critic for stability. Longer term: integrate attention-based GNN layers and log more reward components for diagnosis.

Small, self-contained tools that show how I think with code. Everything below runs client-side.

Quick computation of theoretical option prices and key sensitivities using Black–Scholes.
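A minimal Python sketch of what such a calculator computes, assuming a European option on a non-dividend-paying underlying. The function and parameter names are illustrative, not taken from the widget itself. It prices calls and puts from d1 and d2 and returns the standard Greeks in closed form.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal CDF via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def norm_pdf(x: float) -> float:
    """Standard normal density."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def black_scholes(S, K, T, r, sigma, kind="call"):
    """European option price and Greeks under Black-Scholes (no dividends).

    S: spot, K: strike, T: time to expiry in years,
    r: continuously compounded risk-free rate, sigma: annualized volatility.
    Hypothetical helper for illustration only.
    """
    if T <= 0 or sigma <= 0:
        raise ValueError("T and sigma must be positive")
    sqrt_t = math.sqrt(T)
    d1 = (math.log(S / K) + (r + 0.5 * sigma**2) * T) / (sigma * sqrt_t)
    d2 = d1 - sigma * sqrt_t
    disc = math.exp(-r * T)  # discount factor e^(-rT)

    if kind == "call":
        price = S * norm_cdf(d1) - K * disc * norm_cdf(d2)
        delta = norm_cdf(d1)
        theta = (-S * norm_pdf(d1) * sigma / (2 * sqrt_t)
                 - r * K * disc * norm_cdf(d2))
        rho = K * T * disc * norm_cdf(d2)
    elif kind == "put":
        price = K * disc * norm_cdf(-d2) - S * norm_cdf(-d1)
        delta = norm_cdf(d1) - 1.0
        theta = (-S * norm_pdf(d1) * sigma / (2 * sqrt_t)
                 + r * K * disc * norm_cdf(-d2))
        rho = -K * T * disc * norm_cdf(-d2)
    else:
        raise ValueError("kind must be 'call' or 'put'")

    # Gamma and vega are identical for calls and puts.
    gamma = norm_pdf(d1) / (S * sigma * sqrt_t)
    vega = S * norm_pdf(d1) * sqrt_t  # per 1.00 change in sigma
    return {"price": price, "delta": delta, "gamma": gamma,
            "vega": vega, "theta": theta, "rho": rho}
```

For S = K = 100, T = 1, r = 5% and sigma = 20%, the call comes out near 10.45 and the put near 5.57, and their difference matches S - K·e^(-rT), a quick put-call parity check. Vega here is per unit of volatility and theta is per year; many quote screens divide them by 100 and 365 respectively.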

A small shelf of DL / RL / game-theory papers I revisit often, plus why they matter to my work.

The pragmatic RL workhorse. Clipped objectives and GAE make PPO stable enough for messy environments; it’s my default baseline before trying fancier multi-agent tricks.
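To make the two ingredients concrete, here is a small pure-Python sketch: Generalized Advantage Estimation (GAE) computed backwards over one rollout, and PPO's clipped surrogate objective for given probability ratios r_t = π_new(a_t|s_t) / π_old(a_t|s_t). The function names and defaults (gamma=0.99, lam=0.95, eps=0.2) are common choices assumed here, not values taken from the sandbox's trainer.

```python
from typing import List


def gae(rewards: List[float], values: List[float], dones: List[bool],
        last_value: float, gamma: float = 0.99, lam: float = 0.95) -> List[float]:
    """Generalized Advantage Estimation over one rollout (illustrative sketch).

    values[t] is V(s_t); last_value bootstraps V(s_T) after the final step.
    dones[t] is True if the episode ended at step t (no bootstrapping across it).
    """
    advantages = [0.0] * len(rewards)
    running = 0.0
    next_value = last_value
    for t in reversed(range(len(rewards))):
        not_done = 0.0 if dones[t] else 1.0
        # One-step TD error: delta_t = r_t + gamma * V(s_{t+1}) - V(s_t)
        delta = rewards[t] + gamma * next_value * not_done - values[t]
        # A_t = delta_t + gamma * lam * A_{t+1}, reset at episode boundaries
        running = delta + gamma * lam * not_done * running
        advantages[t] = running
        next_value = values[t]
    return advantages


def ppo_clip_objective(ratios: List[float], advantages: List[float],
                       eps: float = 0.2) -> float:
    """Mean of min(r_t * A_t, clip(r_t, 1 - eps, 1 + eps) * A_t), to be maximized."""
    total = 0.0
    for r, a in zip(ratios, advantages):
        clipped = min(max(r, 1.0 - eps), 1.0 + eps)
        total += min(r * a, clipped * a)
    return total / len(ratios)
```

With gamma = lam = 1 and zero value estimates, GAE reduces to the reward-to-go, which makes a handy sanity check. The clip removes any incentive to push a ratio beyond 1 ± eps in the direction the advantage favors, which is where much of PPO's stability comes from.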

The cleanest blueprint for sequence modelling without recurrence. I borrow its attention patterns when experimenting with order-flow encoding and when comparing graph encoders to sequence models for market microstructure.
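The core operation is scaled dot-product attention, softmax(QKᵀ / √d_k)·V. Below is a dependency-free, single-head sketch with an optional causal mask. In an order-flow setting, the rows of Q, K and V would be projections of per-event feature vectors; that framing is an assumption for illustration, not a description of an existing encoder.

```python
import math
from typing import List

Matrix = List[List[float]]


def softmax(xs: List[float]) -> List[float]:
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    s = sum(exps)
    return [e / s for e in exps]


def scaled_dot_product_attention(Q: Matrix, K: Matrix, V: Matrix,
                                 causal: bool = False) -> Matrix:
    """softmax(Q K^T / sqrt(d_k)) V for a single head (illustrative sketch).

    Q: n_q x d_k, K: n_k x d_k, V: n_k x d_v. With causal=True, query i only
    attends to keys 0..i (decoder-style mask), assuming n_q == n_k.
    """
    d_k = len(K[0])
    out = []
    for i, q in enumerate(Q):
        # Similarity of query i with every key, scaled by sqrt(d_k)
        scores = [sum(qa * ka for qa, ka in zip(q, k)) / math.sqrt(d_k) for k in K]
        if causal:
            # Masked positions get -inf, i.e. zero weight after the softmax
            scores = [s if j <= i else float("-inf") for j, s in enumerate(scores)]
        weights = softmax(scores)
        # Weighted sum of value vectors, column by column
        out.append([sum(w * v[c] for w, v in zip(weights, V)) for c in range(len(V[0]))])
    return out
```

Running several such heads in parallel on learned projections of Q, K and V gives multi-head attention; the causal flag is what keeps a sequence model from peeking at future events.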

A lightweight way to let graphs learn where to focus via attention over neighbors. It’s my go-to reference before adding relational inductive bias to financial graphs or swapping GraphSAGE for something more expressive.
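A single-head graph attention layer can be sketched in pure Python: project node features with W, score each neighbor with LeakyReLU(aᵀ[Wh_i ‖ Wh_j]), normalize the scores with a softmax over the neighborhood (including a self-loop), and take the weighted sum. The function names, toy graph and parameter values are hypothetical, and the output nonlinearity and multi-head concatenation of the full GAT design are omitted for brevity.

```python
import math
from typing import Dict, List

Vector = List[float]


def leaky_relu(x: float, slope: float = 0.2) -> float:
    return x if x > 0 else slope * x


def matvec(W: List[Vector], h: Vector) -> Vector:
    return [sum(w * x for w, x in zip(row, h)) for row in W]


def gat_layer(H: List[Vector], neighbors: Dict[int, List[int]],
              W: List[Vector], a: Vector) -> List[Vector]:
    """One single-head graph attention layer, no output nonlinearity (sketch).

    H: node features (n x F), neighbors: adjacency lists,
    W: F' x F projection, a: attention vector of length 2F'.
    """
    Z = [matvec(W, h) for h in H]  # z_i = W h_i
    f_out = len(Z[0])
    a_src, a_dst = a[:f_out], a[f_out:]
    out = []
    for i, z_i in enumerate(Z):
        nbrs = [i] + [j for j in neighbors.get(i, []) if j != i]  # add self-loop
        # e_ij = LeakyReLU(a^T [z_i || z_j]), split into source and target halves
        logits = [leaky_relu(sum(p * q for p, q in zip(a_src, z_i))
                             + sum(p * q for p, q in zip(a_dst, Z[j])))
                  for j in nbrs]
        m = max(logits)
        exps = [math.exp(e - m) for e in logits]
        total = sum(exps)
        alphas = [e / total for e in exps]  # softmax over the neighborhood
        out.append([sum(alpha * Z[j][c] for alpha, j in zip(alphas, nbrs))
                    for c in range(f_out)])
    return out
```

With a set to all zeros, every neighbor gets equal weight and the layer collapses to mean aggregation, similar in spirit to GraphSAGE's mean aggregator; learning a is what lets the layer decide which neighbors matter.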

What I’m comfortable using day-to-day, grouped by how I actually think about my toolbox.

Courses that shaped my quantitative and technical foundation.

Formal programs that complement my academic background.

Exam: May 2026

How I can contribute to a trading, product, or research-driven quant team.

You can get a concise snapshot of my profile in the 1-page CV and more depth (context, projects, outcomes) in the detailed version: